This document identifies the steps needed to stand up and configure the reporting stack for a new server.

NOTE: Before you get started, the OpenLMIS server needs to have a user with username "admin".

NOTE: Step-by-step instructions can be found on the page "Set up the reporting stack step-by-step".

Clone the OpenLMIS-ref-distro GitHub repository into your local developer environment. We assume your environment already has Docker installed.

    git clone https://github.com/openlmis/openlmis-ref-distro
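Since the steps on this page assume that Docker is already installed, a quick preflight check can catch a missing tool before anything is cloned or built. The following is a minimal sketch; the helper name `missing_tools` and the list of tools are assumptions based on the commands used on this page, not part of the OpenLMIS tooling.

```shell
# Print the name of every tool passed as an argument that is not on PATH.
# Prints nothing when all of them are available.
missing_tools() {
    for tool in "$@"; do
        if ! command -v "$tool" >/dev/null 2>&1; then
            printf '%s\n' "$tool"
        fi
    done
}

# Example: report anything missing before cloning.
# missing_tools git docker docker-compose
```

If the function prints nothing, the tools it was given are all available in the current shell.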

Once cloned, update your settings.env file, as is standard practice, to identify the IP address that Consul will run on. If you plan to use Scalyr, also update SCALYR_API_KEY.

Update the following configuration variables that are specific to the reporting stack:

Review and update the .env variables

Update all passwords (Note: if the database username or password is changed, update SQLALCHEMY_DATABASE_URI to match)

Update the domain names for Superset and NiFi in the /etc/hosts file
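Two of the items above are easy to get wrong by hand: keeping SQLALCHEMY_DATABASE_URI in sync with the database credentials, and adding the domain names to /etc/hosts only once. The sketch below illustrates both. The helper names, the host names `superset.local` and `nifi.local`, and the example credentials are hypothetical; the URI follows the general SQLAlchemy form `postgresql://user:password@host:port/database`, and the sketch assumes the credentials contain no characters that need URL-encoding.

```shell
# Build a SQLAlchemy-style PostgreSQL URI from its parts.
# Assumption: user and password contain no "@", ":" or "/" characters.
build_db_uri() {
    # $1=user $2=password $3=host $4=port $5=database
    printf 'postgresql://%s:%s@%s:%s/%s\n' "$1" "$2" "$3" "$4" "$5"
}

# Append "IP hostname" to a hosts file unless an uncommented line
# already lists exactly that hostname.
add_host_entry() {
    # $1=ip $2=hostname $3=hosts file (defaults to /etc/hosts)
    hosts_file="${3:-/etc/hosts}"
    if ! awk -v h="$2" '
            /^[[:space:]]*#/ { next }
            { for (i = 2; i <= NF; i++) if ($i == h) found = 1 }
            END { exit !found }' "$hosts_file" 2>/dev/null; then
        printf '%s %s\n' "$1" "$2" >> "$hosts_file"
    fi
}

# Example (hypothetical values; writing to /etc/hosts needs root):
# build_db_uri reporting_user s3cret db 5432 reporting_db
# add_host_entry 127.0.0.1 superset.local
# add_host_entry 127.0.0.1 nifi.local
```

Passing a third argument to `add_host_entry` lets you try it against a scratch file before touching the real /etc/hosts.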

Start the services:

    # Destroy any existing volumes
    docker-compose down -v

    # Build and start the services
    docker-compose up --build

    # If not using Scalyr, you should use:
    # docker-compose up --build -d --scale scalyr=0
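The choice between the two start commands can be scripted. The sketch below picks the arguments based on whether a Scalyr API key is supplied; the helper name `compose_up_args` and the rule "an empty key means Scalyr is not in use" are assumptions for illustration, while the arguments themselves are the ones given above.

```shell
# Print the docker-compose arguments for starting the stack.
# Pass the Scalyr API key (or an empty string) as $1.
compose_up_args() {
    if [ -n "$1" ]; then
        printf '%s\n' 'up --build'
    else
        # No key: run detached and scale the scalyr service to zero.
        printf '%s\n' 'up --build -d --scale scalyr=0'
    fi
}

# Example (word splitting of the output is intentional):
# docker-compose $(compose_up_args "$SCALYR_API_KEY")
```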

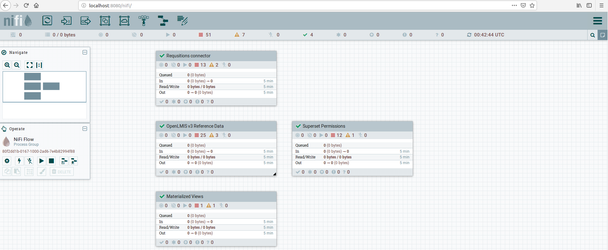

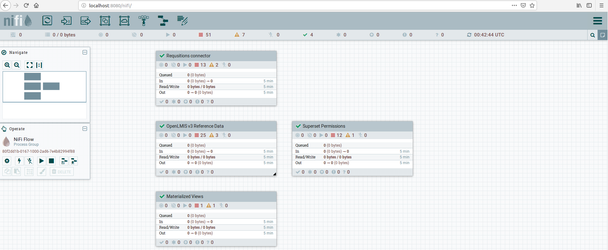

Now, we need to update Nifi with the appropriate BASE URL and secrets to properly extract information and store it.

NB: If it's the first time running the reporting stack, and you'd like to get data to view immediately, follow the steps below:


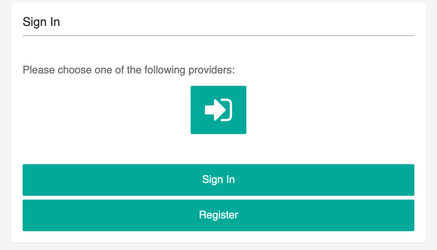

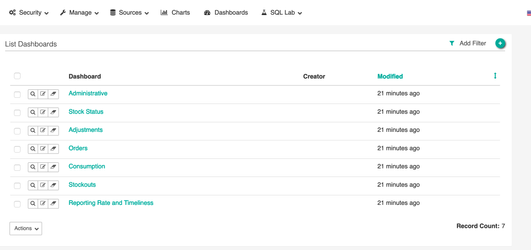

Now, we need to load Superset so that it can get the data from the Postgres database and load the charts and dashboards.

sign in button which will then prompt you for authentication and authorization.

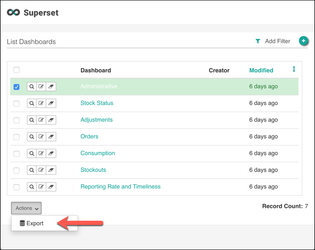

After making changes to a dashboard, administrators may wish to back up its definition to a flat file. This may be done as shown below.

Some browsers prevent the download of .json files by default. If you aren't presented with a Save As dialog, ensure that your browser isn't blocking popups.

Notes about Importing the Backup File

If you export dashboard 123 built off of datasource 456 and try to load it into a fresh instance of superset, you’ll have a lot of broken pointers because that fresh instance of superset will try to start with ids of 1 for both.

For the reporting stack, we built everything off of a “fresh” instance of superset, so when the content is imported it doesn’t encounter this issue.

It should be possible to edit the .json file by hand to fix the broken pointers, although we have not tried this yet and don't know precisely where they'll be encountered.
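As a starting point for such a hand edit, it may help to list every numeric id in the backup file so that candidate pointers can be reviewed in one place. The sketch below is format-agnostic: it makes no claim about where Superset stores its references and simply reports any key named `id` or ending in `_id`, including keys inside escaped JSON strings. The helper name `list_id_fields` and the example file name are hypothetical.

```shell
# List every key named "id" or ending in "_id" that has a numeric value,
# prefixed by the line number on which it occurs.
# The optional backslashes allow for JSON nested inside string values.
list_id_fields() {
    grep -noE '\\?"([A-Za-z0-9_]*_)?id\\?"[[:space:]]*:[[:space:]]*[0-9]+' "$1"
}

# Example (hypothetical file name):
# list_id_fields dashboard-backup.json
```

The output only shows where ids appear; deciding which of them are pointers that need renumbering still requires inspecting the file.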